Executive Summary

The hiring process is a critical gateway to economic opportunity, determining who can access consistent work to support themselves and their families. Employers have long used digital technology to manage their hiring decisions, and now many are turning to new predictive hiring tools to inform each step of their hiring process.

This report explores how predictive tools affect equity throughout the entire hiring process. We examine popular tools that many employers currently use, and offer recommendations for further scrutiny and reflection. We conclude that without active measures to mitigate it, bias will arise in predictive hiring tools by default.

Key Reflections:

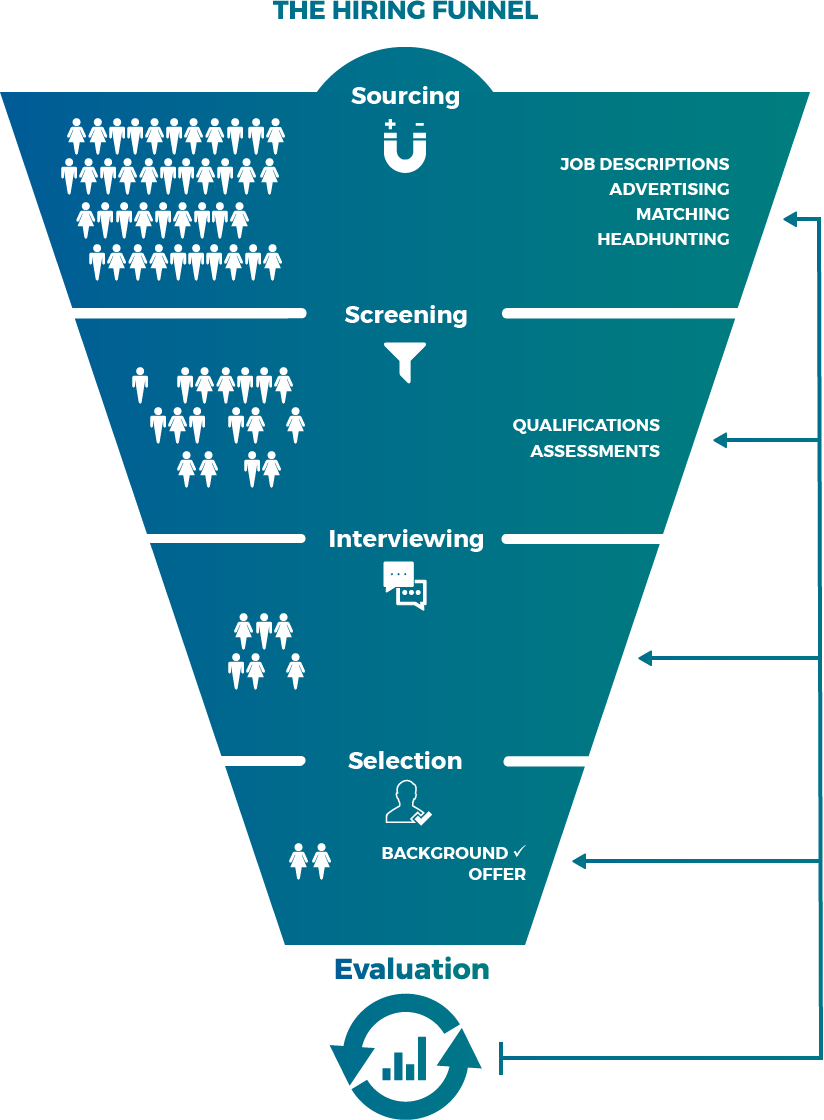

Hiring is rarely a single decision point, but rather a cumulative series of small decisions. Predictive technologies can play very different roles throughout the hiring funnel, from determining who sees job advertisements, to estimating an applicant’s performance, to forecasting a candidate’s salary requirements.

While new hiring tools rarely make affirmative hiring decisions, they often automate rejections. Much of this activity happens early in the hiring process, when job opportunities are automatically surfaced to some people and withheld from others, or when candidates are deemed by a predictive system not to meet the minimum or desired qualifications needed to move further in the application process.

Predictive hiring tools can reflect institutional and systemic biases, and removing sensitive characteristics is not a solution. Predictions based on past hiring decisions and evaluations can both reveal and reproduce patterns of inequity at all stages of the hiring process, even when tools explicitly ignore race, gender, age, and other protected attributes.

Nevertheless, vendors’ claim that technology can reduce interpersonal bias should not be ignored. Bias against people of color, women, and other underrepresented groups has long plagued hiring, but with more deliberation, transparency, and oversight, some new hiring technologies might be poised to help improve on this poor baseline.

Even before people apply for jobs, predictive technology plays a powerful role in determining who learns of open positions. Employers and vendors are using sourcing tools, like digital advertising and personalized job boards, to proactively shape their applicant pools. These technologies are outpacing regulatory guidance, and are exceedingly difficult to study from the outside.

Hiring tools that assess, score, and rank jobseekers can overstate marginal or unimportant distinctions between similarly qualified candidates. In particular, rank-ordered lists and numerical scores may influence recruiters more than we realize, and not enough is known about how human recruiters act on predictive tools’ guidance.

Recommendations:

Vendors and employers must be dramatically more transparent about the predictive tools they build and use, and must allow independent auditing of those tools. Employers should disclose information about the vendors and predictive features that play a role in their hiring processes. Vendors should take active steps to detect and remove bias in their tools. They should also provide detailed explanations about these steps, and allow for independent evaluation.

The EEOC should begin to consider new regulations that interpret Title VII in light of predictive hiring tools. At a bare minimum, the agency should issue a report that further explores these issues, including a candid reflection on the capacity of current regulatory guidance to account for modern hiring technologies.

Regulators, researchers, and industrial-organizational psychologists should revisit the meaning of “validation” in light of predictive hiring tools. In particular, the value of correlation as a signal of “validity” for antidiscrimination purposes should be vigorously debated.

Digital sourcing platforms must recognize their growing influence on the hiring process and actively seek to mitigate bias. Ad platforms and job boards that rely on dynamic, automated systems should be further scrutinized–both by the companies themselves, and by outside stakeholders.

Introduction

The hiring process is a critical gateway to economic opportunity, determining who can access consistent work to support themselves and their families. Employers have long used digital technology to manage their hiring decisions, and now many are turning to new predictive hiring tools to inform each step of their hiring process.

Today, employers like Target, Hilton, Cisco, PepsiCo, Amazon, and Ikea, along with major staffing agencies, are testing and adopting data-driven, predictive tools. With increasing public attention on “artificial intelligence” and the emerging popularity of the technology in the employment context, these tools are simultaneously touted for their potential to reduce bias in hiring and vigorously derided for their capacity to exacerbate it. As predictive technologies continue to proliferate throughout the hiring process, for both low-wage, low-skilled jobs and higher-wage, white-collar positions, it is critical to understand what types of tools are currently being used and how they work, as well as how they may advance or reduce equity.

Hiring is rarely a single decision, but rather a series of smaller, sequential decisions that culminate in a job offer—or a rejection. Hiring technologies can play very different roles throughout this process. For example, in the early stages of recruiting, automated predictions can steer job advertisements and personalized job recommendations to jobseekers from particular demographic groups. Once candidates have applied, algorithms help recruiters assess and quickly disqualify candidates, or prioritize them for further review. Some tools engage candidates with chatbots and virtual interviews, and others use game-based assessments to reduce reliance on traditional (and often structurally biased) factors like university attendance, GPA, and test scores. At each stage, predictive technologies can have a powerful effect on who ultimately succeeds in the hiring process.

“In the case of systems meant to automate candidate search and hiring, we need to ask ourselves: What assumptions about worth, ability and potential do these systems reflect and reproduce? Who was at the table when these assumptions were encoded?”

Meredith Whittaker, Executive Director, AI Now Institute

This report explores how predictive tools are integrated throughout the hiring process. These tools are commonly referred to as “hiring algorithms,” or “artificial intelligence,” but we have chosen to use the frame of “prediction” to remove needless complexity and mystique. Simply put, predictive tools aim to forecast outcomes and behavior by analyzing existing data.

In preparing this report, we attended industry conferences to learn how hiring professionals understand their own work, and how talent acquisition technology vendors frame their offerings. We reviewed technical and interdisciplinary research to situate modern hiring tools within the evolving landscape of both the hiring industry and artificial intelligence technologies. We studied the features, technical specifics, and interfaces of key predictive hiring products. Finally, we closely analyzed vendors’ marketing and research materials, public statements and presentations, and product documentation.

In the first part of this report, we summarize some important background and key concepts: the history of hiring technologies since the 1990s, incentives driving employers to adopt hiring technologies, a conceptual framework for assessing equity (especially forms of bias beyond the interpersonal), and the basic U.S. legal and regulatory context. Next, we outline the four stages of the classic hiring process: sourcing, screening, interviewing, and selection. We explore popular predictive technologies used at each stage, analyzing their promises and pitfalls. In closing, we offer reflections and recommendations.

Key Concepts

This section offers background and concepts needed to fully engage with the remainder of this report. First, we outline the evolution of hiring technologies since the advent of the internet, describe how the machine learning techniques used by many of today’s predictive tools work, and identify the primary reasons employers adopt new technologies. Next, we articulate several different kinds of social bias, and explain common ways that predictive tools can absorb and compound them. Finally, we briefly summarize relevant U.S. law and policy, highlighting areas of ambiguity.

A History of Hiring Technology

Hiring technology has evolved rapidly alongside the internet. As early as the 1990s, online job boards like Monster.com capitalized on the new medium by offering employers digital job listings at rates well below those of newspaper classified ads. Search engines for these online job postings emerged soon after, and pay-per-click advertising helped recruiters compete for attention in a newly crowded online market.

Next came new ways to apply for jobs over the internet, triggering a jump in the volume of applications for open positions as it became easier to apply for multiple jobs. The resulting deluge of applicants–many of whom lacked employers’ desired qualifications–prompted employers to adopt applicant tracking systems to help both organize and evaluate rapidly growing pools of candidates.

Meanwhile, recruiters began using digital technology to proactively seek out desirable applicants. By scouring new, public sources of information (like professional profiles and work samples on emerging platforms like LinkedIn), recruiters were able to broaden their focus from “active” candidates–those proactively exploring or applying to open roles–to “passive” ones, who had desirable qualifications but no apparent intention to switch jobs.

As the quantity of potential job candidates ballooned further to include both higher volumes of active applicants and millions of passive ones, some employers began turning to new screening tools to keep up. While employers had long relied on tests and assessments to screen jobseekers, the development of new techniques to collect and analyze data prompted the introduction of more advanced assessments.

In response to the growing push for diversity and inclusion (D&I) in the workplace, some technology vendors have more recently introduced tools to facilitate diversity recruiting and reduce various biases endemic to the hiring process. Some vendors offer entire products geared primarily or exclusively for diversity recruiting, while others incorporate features catering to those goals.

Today, hiring technology vendors increasingly build predictive features into tools that are used throughout the hiring process. They rely on machine learning techniques, where computers detect patterns in existing data (called training data) to build models that forecast future outcomes in the form of different kinds of scores and rankings. This new wave of hiring technology resembles popular consumer services like Google’s search engine, Netflix’s personalized movie recommendations, and Amazon’s Alexa assistant, as well as advanced marketing and sales tools like Salesforce.
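
To make the idea of learning from training data more concrete, the following is a minimal sketch, not any vendor's actual method. It assumes a toy model that records how often resumes containing each keyword led to a hire in the past, then scores and ranks new candidates by averaging those rates. The data, keywords, and function names (`train`, `score`) are all hypothetical.

```python
from collections import defaultdict

# Hypothetical training data: (keywords on a resume, was the person hired?)
past_decisions = [
    ({"python", "sql"}, True),
    ({"python", "lacrosse"}, True),
    ({"sql"}, False),
    ({"excel"}, False),
    ({"lacrosse", "excel"}, True),
]

def train(rows):
    """For each keyword, learn the share of past resumes containing it that were hired."""
    seen, hired = defaultdict(int), defaultdict(int)
    for keywords, was_hired in rows:
        for keyword in keywords:
            seen[keyword] += 1
            hired[keyword] += int(was_hired)
    return {keyword: hired[keyword] / seen[keyword] for keyword in seen}

def score(model, keywords, default=0.5):
    """Average the learned rates; keywords never seen in training get a neutral default."""
    values = [model.get(keyword, default) for keyword in keywords]
    return sum(values) / len(values) if values else default

model = train(past_decisions)

# Hypothetical new candidates, ranked from highest to lowest score.
candidates = {
    "cand_1": {"sql", "excel"},
    "cand_2": {"lacrosse"},
    "cand_3": {"python", "sql"},
}
ranking = sorted(candidates, key=lambda c: score(model, candidates[c]), reverse=True)
print(ranking)
```

In this toy data, a keyword with no plausible link to job performance ends up with the highest learned rate simply because of who was hired before, so the candidate listing only that keyword is ranked first. The model reproduces whatever patterns the past decisions contain, whether or not they are meaningful.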

Employers turn to hiring technology to increase efficiency, and in hopes that they will find more successful–and sometimes, more diverse–employees. For many employers, such tools are a basic part of doing business in the digital age. Understanding employers’ motivations to adopt these tools is helpful to make sense of the context in which they are used.

Most employers want to reduce time to hire, the amount of time it takes to fill an open position. It takes a typical U.S. employer six weeks to fill a role, and the longer it takes to find a suitable candidate, the more time and resources are diverted from other priorities. A slow hiring process might lead to a poor applicant experience and increase the likelihood that candidates will drop out of the hiring process or share their bad experience with friends. Employers also fear losing candidates to their competitors–a particularly acute concern in a tight job market. Moreover, some companies have seasonal staffing needs that make it critical to hire new employees within a particular time frame.

Employers also want to reduce cost per hire, or the marginal cost of adding a new worker, which is roughly $4,000 in the U.S. According to research from LinkedIn, 35 percent of companies feel significantly constrained by limited recruitment budgets, and most don’t expect an improvement in the coming year, even as many anticipate an increase in hiring volume.

Employers also try to maximize quality of hire, which is judged based on metrics related to performance evaluations, the quantity or quality of worker output, or whether the hire was eventually promoted or disciplined. Inversely, employers might also aim to avoid hiring “toxic” employees, to prevent theft, or even to forestall labor organizing activities. Many employers also look to maximize the tenure of their workers, presuming that “successful” hires will stay longer than less successful ones. Long tenure is seen as a simple, quantifiable signal of a high-quality hire, while brief tenure can be interpreted as the sign of a “bad fit.” Turnover is costly, requiring an employer to hire and train new workers.

Finally, some employers have goals for workplace diversity, based on gender, race, age, religion, disability, or veteran or socioeconomic status. They may be drawn toward hiring tools that purport to help avoid discriminating against applicants in protected categories, or that appear poised to proactively diversify their workforce. Hiring vendors of all stripes claim they can help employers achieve these goals.

Equity: Beyond Interpersonal Bias

Hiring tool vendors often tout technology’s potential to remove bias from the hiring process. They argue that by making hiring more consistent and efficient, their tools will empower recruiters to make fairer and more holistic hiring decisions, or that their tools will naturally reduce bias by obscuring applicants’ sensitive characteristics. But, as we explain below, vendors are usually referring to interpersonal human prejudice, which is only one source of bias. Institutional, structural, and other forms of bias are just as important, if not more important, aspects of any equity analysis when it comes to employment.

Different Dimensions of Bias

In common parlance, the term “bias” is often used to refer to interpersonal bias–prejudices held by individual people, whether implicitly or explicitly. Interpersonal bias against people of color, women, and other marginalized groups has long plagued the hiring process. To this day, many hiring managers evaluate candidates in ways that contribute to disparate hiring outcomes, leading to underrepresentation and pay disparities in roles across industries. But other, more structural kinds of bias also act as barriers to opportunity for jobseekers, especially when predictive tools are involved.

Bias arises at the institutional level when policies and workplace cultures serve to benefit certain workers and disadvantage others. For example, a business that rewards men for acting ambitiously but punishes women for the same behavior will lead to situations where men are seen as more successful employees. Likewise, a company that tends to hire from a privileged and homogeneous community and then uses “culture fit” as a factor in hiring decisions could end up methodically rejecting otherwise qualified candidates who come from more diverse backgrounds.

Hiring practices can also perpetuate systemic (or “structural”) biases: patterns of disadvantage stemming from contemporary and historical legacies such as racism, unequal economic opportunity, and segregation. For example, many white collar employers place a high value on elite university attendance, but despite changing admissions policies, such a credential is still disproportionately attained by privileged individuals, and often out of reach for those who lack access to quality primary and secondary education. Without proactive steps to account for these realities, even seemingly objective hiring criteria like one’s alma mater or test performance can end up reflecting systemic biases.

Biases can also be internalized by jobseekers themselves, influencing their own behaviors, such as whether or not to apply for a given job. Moreover, within and across all of these categories, the intersection of multiple identities can compound disadvantage in ways that are often overlooked. For instance, a black woman jobseeker may be judged more harshly than other women because of her race, while at the same time find it harder to access opportunities than black men because of gender-based discrimination. The treatment of intersectionality in employment law is far from settled, and its manifestation in the digital realm is only beginning to be studied.

The types of bias described above can exist and emerge in predictive hiring tools in several distinct ways.

First, when the training data for a model is itself inaccurate, unrepresentative, or otherwise biased, the resulting model and the predictions it makes could reflect these flaws in a way that drives inequitable outcomes. For example, an employer, with the help of a third-party vendor, might select a group of employees who meet some definition of success–for instance, those who “outperformed” their peers on the job. If the employer’s performance evaluations were themselves biased, favoring men, then the resulting model might predict that men are more likely to be high performers than women, or make more errors when evaluating women. This is not theoretical: One resume screening company found that its model had identified having the name “Jared” and playing high school lacrosse as strong signals of success, even though those features clearly had no causal link to job performance.

Predictive models can reflect biases in other subtle and powerful ways, which can be difficult to detect and correct. For example, in one well-known case, an employer who wanted to maximize worker tenure found that distance from work was the single most important variable that determined how long workers stayed with the employer–but it was also a factor that strongly correlated with race. Since many social patterns related to education and work reflect troubled legacies of racism, sexism, and other forms of socioeconomic disadvantage, blindly replicating those patterns via software will only perpetuate and exacerbate historical disparities. These patterns can also emerge as tools are used, particularly when models are built to learn and adapt to the preferences of their users over time. Importantly, removing or obscuring sensitive factors like gender and race will not prevent predictive models from reflecting patterns of bias.
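
The proxy effect described above can be sketched with a few lines of code. This is an illustrative example under stated assumptions, not a reconstruction of the case mentioned: the applicants, the two group labels, the commute distances, and the 10-mile cutoff are all invented. The screening rule never sees group membership, yet the groups pass at very different rates because distance is correlated with group in the data.

```python
# Hypothetical, hand-made applicants: (group, commute distance in miles). Not real data.
applicants = [
    ("A", 3), ("A", 5), ("A", 6), ("A", 8), ("A", 14),
    ("B", 7), ("B", 12), ("B", 15), ("B", 18), ("B", 20),
]

def screen(commute_miles, max_miles=10):
    """A 'group-blind' rule: its only input is commute distance."""
    return commute_miles <= max_miles

def selection_rates(rows):
    """Share of each group that passes the screen."""
    totals, passed = {}, {}
    for group, miles in rows:
        totals[group] = totals.get(group, 0) + 1
        passed[group] = passed.get(group, 0) + (1 if screen(miles) else 0)
    return {group: passed[group] / totals[group] for group in totals}

rates = selection_rates(applicants)
print(rates)  # {'A': 0.8, 'B': 0.2}
```

Removing the group column from the data would change nothing here, since the rule already ignores it. The disparity comes entirely from the correlated variable.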

Second, people can be unduly influenced by computerized recommendations. Separate from the mechanics of prediction itself, predictive hiring tools can create new opportunities for cognitive bias as they display information to human recruiters. A phenomenon known as automation bias occurs when people “give undue weight to the information coming through their monitors.” When predictions, numerical scores, or rankings are presented as precise and objective, recruiters may give them more weight than they truly warrant, or more deference than a vendor intended. Moreover, when tools reveal job candidates’ pictures or other demographic features, these interfaces could also subconsciously affect recruiters’ decisions.

A variety of other equity concerns can also be implicated by the technical design and interface of hiring software. For one, candidates with limited internet access or skills, or those with disabilities, may face distinct challenges using online job platforms, which can in turn influence a system’s judgement of their suitability and lead to further exclusion. Additionally, the collection, structure, and labeling of underlying data can impose rigid or exclusionary definitions of identity. For instance, tools that classify applicants into “male” and “female” categories–even for the affirmative purpose of monitoring for gender equality–could end up marginalizing queer, transgender, and non-binary people, while tools that classify people by race reify political categories that “by their very nature mark a status inequality.”

Without active measures to mitigate them, biases will arise in predictive hiring tools by default. But predictive tools could also be turned in the other direction, offering employers the opportunity to look inward and adjust their own past behavior and assumptions. This insight could also help inform data and design choices for digital hiring tools that ensure they promote diversity and equity goals, rather than detract from them. Armed with a deeper understanding of the forces that may have shaped prior hiring decisions, new technologies, coupled with affirmative techniques to break entrenched patterns, could make employers more effective allies in promoting equity at scale.

Law and Policy: Antidiscrimination and Ambiguities

This section offers a brief overview of key U.S. laws and regulations related to discrimination in hiring. The most pertinent law, Title VII of the Civil Rights Act of 1964, broadly prohibits hiring discrimination by employers and employment agencies on the basis of certain protected characteristics. But there are ambiguities about how this law applies to predictive hiring technology. A range of other state and federal laws and rules are also relevant to assessing and overseeing predictive hiring tools.

Key U.S. Statutes and Regulations

Title VII of the Civil Rights Act of 1964 forbids employers from discriminating on the basis of race, color, religion, sex, and national origin. The law seeks to “achieve equality of employment opportunities and remove barriers that have operated in the past to favor … white employees over other employees.” Its provisions extend broadly to advertising, hiring, compensation, terms, conditions, and privileges of employment. Other federal legislation has extended similar protections to older people and people with disabilities.

More specifically, Title VII makes it unlawful for employers and employment agencies to “limit, segregate, or classify … employees or applicants for employment in any way which would deprive or tend to deprive any individual of employment opportunities or otherwise adversely affect [them]” because of their protected class status. Title VII is conventionally understood to prohibit two kinds of discrimination: disparate treatment and disparate impact. Disparate treatment cases involve overt discrimination, whereas disparate impact covers employment practices that are facially neutral but have a discriminatory effect.

Because disparate impact is often the theory invoked to address harms brought about by predictive tools, the mechanics of a disparate impact case deserve further explanation. To prevail in a disparate impact case, a complainant must first make some showing that an employment practice has a disparate impact on the basis of a protected characteristic. Next, an employer can counter by showing a valid “business necessity”–for example, some amount of evidence that the practice was “job-related,” or that it accurately measured an applicant’s ability to perform on the job. If the employer is successful in making its case, the complainant then must show the existence of a “less discriminatory alternative,” such as another kind of test or procedure that would serve the employer’s legitimate interest while having less of a harmful effect on protected groups.
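
As a rough illustration of the first step, a showing of disparate impact often begins with a comparison of selection rates across groups. The sketch below is not drawn from this report's text and is not a legal test. It assumes the "four-fifths" rule of thumb from the Uniform Guidelines on Employee Selection Procedures, under which a group's selection rate below 80 percent of the highest group's rate is generally regarded as evidence of adverse impact. The group names and counts are hypothetical.

```python
def impact_ratios(applied, selected):
    """Each group's selection rate divided by the highest group's selection rate."""
    rates = {group: selected[group] / applied[group] for group in applied}
    highest = max(rates.values())
    return {group: rates[group] / highest for group in rates}

# Hypothetical counts of applicants and of those who passed a screening step.
applied = {"group_x": 200, "group_y": 100}
selected = {"group_x": 60, "group_y": 15}

ratios = impact_ratios(applied, selected)

# Assumed threshold: the four-fifths (0.8) rule of thumb, not a bright-line legal standard.
flagged = [group for group, ratio in ratios.items() if ratio < 0.8]
print(ratios, flagged)
```

Here group_x is selected at a rate of 30 percent and group_y at 15 percent, so group_y's ratio is roughly one half and it is flagged. A ratio like this would only be a starting point: the employer could still respond with a business necessity showing, as described above.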

The Equal Employment Opportunity Commission (EEOC) is the federal agency charged with enforcing federal laws related to employment discrimination. In practice, the EEOC does not typically investigate discrimination except when an individual makes a specific complaint. After such a complaint has been filed, the EEOC can open an investigation, and has a broad right to access relevant evidence. The EEOC also periodically issues guidance and regulations, incorporating input from public meetings, discussion, and comments.

Additional legal and regulatory requirements apply to federal contractors, companies and organizations that provide services or products to a government agency, including healthcare providers, universities, technology companies, hotels, and airlines. Such contractors employ a significant portion of the U.S. workforce. These requirements are overseen by the Office of Federal Contract Compliance Programs (OFCCP). For example, Executive Order 11246 requires that most government contractors take “affirmative action” to ensure that equal opportunity is provided in all aspects of their employment, including recruiting–a requirement that goes beyond the basic requirements of Title VII. Contractors are also required to solicit the race, gender, and ethnicity of job applicants, including “internet applicants,” to enable regulatory research and enforcement.

Finally, a range of other federal, state and local laws are relevant to predictive hiring tools. Laws like the Genetic Information Nondiscrimination Act of 2008 anticipated the risk of employers turning to newly available–and highly sensitive–sources of data to inform hiring decisions. Some cities and states have expanded protections to characteristics not explicitly covered by Title VII, like gender identity, sexual orientation, citizenship status, and political affiliation. Equal pay and salary history laws promote equitable compensation. In other countries, particularly in Europe, data protection laws like the General Data Protection Regulation (GDPR) play a significant role in determining what information and data processing techniques employers can use during the course of their recruitment activities.

Gaps and Ambiguities

The laws and regulations described above may not always apply to predictive technologies. First, it is not obvious that hiring technology vendors are themselves covered by Title VII. The statute does cover employment agencies–entities that “procure employees for an employer”–but many vendors would argue they merely provide products and services to employers and ought not be liable for employers’ ultimate use. Second, while Title VII covers employment advertising and applicant sourcing, the EEOC has offered “only minimal guidance in this area,” and only a handful of legal cases have considered these statutory provisions. However, courts have found that advertising campaigns can trigger disparate impact liability, and have been willing to analyze the broader context of an employer’s recruitment ad campaign, not just an ad’s content.

Importantly, current interpretations of the disparate impact doctrine are ill-suited to address bias that arises in machine learning models. For example, the EEOC’s Uniform Guidelines on Employee Selection Procedures, which have not been updated since their enactment in 1978, interpret Title VII to provide a “framework for determining the proper use of tests and other selection procedures.” The framework relies heavily on the notion of “validity studies” to demonstrate that a procedure is sufficiently related to or “significantly correlated with important elements of job performance.” Unfortunately, showing correlation does little to help assess whether a machine learning model is surfacing biases or not. Critics have called this kind of validity analysis “largely ill equipped” and “simply irrelevant” to assessing discrimination in the modern world of data mining.

Finally, investigation and enforcement under existing legal frameworks require complainants and regulators to be able to notice and bring claims of machine-enabled discrimination, and to have the resources and ability to investigate and contest them. At present, many jobseekers may not realize they have been judged by a predictive technology, and even if they do, may not have sufficient access to the tool to describe its impact (or the resources to retain expert witnesses to do so), let alone propose a less discriminatory alternative. The EEOC is under-resourced, yet saddled with a long backlog of complaints, and so has little capacity to take on more complex investigations. For discrimination claims that do end up in court, technology vendors may succeed in shielding themselves from close scrutiny through trade secrecy and intermediary immunity claims, which have so far proven difficult to pierce even in cases where key rights and due process appear to have been undermined.

Hiring is rarely a single decision, but rather a funnel: a series of decisions that culminate in a job offer or a rejection. The hiring process starts well before anyone submits an actual job application, and jobseekers can be disadvantaged or rejected at any stage. Importantly, while new hiring tools rarely make affirmative hiring decisions, they often automate rejections.

Employers start by sourcing candidates, attracting potential candidates to apply for open positions through advertisements, job postings, and individual outreach. Next, during the screening stage, employers assess candidates—both before and after those candidates apply—by analyzing their experience, skills, and characteristics. Through interviewing applicants, employers continue their assessment in a more direct, individualized fashion. During the selection step, employers make final hiring and compensation determinations.

The Hiring Funnel

Below, we explore how new predictive hiring tools are being used in each stage, describing and analyzing illustrative products on the market today. Not all products fit cleanly within one stage—some perform multiple roles behind a single interface, or blur the lines between previously distinct stages. After each description, we offer a brief equity analysis.

We do not attempt to map which employers are using which products. This is because employers can use multiple recruitment tools, often from third party vendors, to manage their hiring activities. Many of these tools can integrate with each other, making it easy for employers to mix and match products behind the scenes. In practice, while it is often obvious what primary applicant tracking system an employer uses (because it is usually visible when exploring a company’s job application portal), it can be nearly impossible to tell from the outside what additional tools—or customizations of those tools—an employer may be using to manage and assess applicants. Employers can even use different tools to assess applicants for different positions within the same firm, which would not be obvious unless someone applied to a variety of roles.

For these reasons, we can’t definitively say which tools are more commonly used to recruit for low-income jobs or service sector jobs, as compared to white collar positions. However, generally speaking, employers’ technology choices seem influenced at least as much by an employer’s size as by differences in job function or industry.

It is important to also note that the marketplace for hiring tools is extremely dynamic. Startups and emerging companies frequently launch new products, acquire one another, or are subsumed into enterprise human resource software companies. As a result, details about particular tools can quickly become outdated.

Recognizing this, we encourage the reader to treat the examples below as archetypes to help inform future investigation and analysis. These products were selected primarily for their capacity to exemplify notable and relevant features.

Sourcing

In the sourcing stage, employers seek out candidates to apply for their job opportunities. Predictive technologies help place and optimize job advertisements, notify jobseekers about potentially appealing positions, and identify candidates who may be poachable from a competitor or who may be enticed to rejoin the job market. Sourcing technologies can shape a candidate pool—for better or for worse—before applications ever change hands.

Job Descriptions

Almost every job opening starts with a job description—the title, framing, requirements, and specific wording used to describe a job opportunity. Job descriptions can powerfully influence who chooses to apply for a position. For example, research has found that job descriptions that rely on stereotypically male words tend to result in fewer female applicants.

One vendor called Textio offers tools to help employers adjust the text of their job descriptions to attract more applicants, and to promote more diverse applicant pools, particularly along gender lines.

Textio works by comparing linguistic patterns in the text of a job posting with historical applicant behavior and hiring outcomes, in order to predict the approximate size and demographics of the expected candidate pool. The tool assigns each job posting an overall score between 0 and 100, reflecting a prediction of how quickly a listing will fill compared to jobs in the same industry and location.

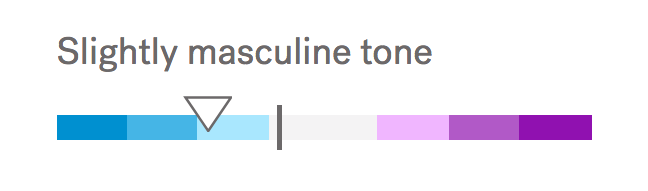

A separate “gender tone meter” claims to measure the extent to which language in the job description risks alienating applicants of either gender. This measure predicts the gender balance of applicants, given the proposed text.

Textio’s gender tone meter

Textio also assesses specific strengths and weaknesses of the job description (like length, complexity, and word choice) and suggests wording changes that would raise its score or improve its gender tone. As employers follow Textio’s suggestions, they can see how those changes could influence both the overall and gender tone scores in real time.

Textio’s real-time recommendations

Textio creates models that take into account the industry and location of the job, as well as in some cases, models that are unique to particular employers who use the service. It updates these models with new job descriptions and demographics of new applicants once a month.

. . .

Because job descriptions are usually a candidate’s first substantive touchpoint with a potential job opportunity, tools like Textio appear poised to help ameliorate gender biases within job descriptions. Textio is somewhat distinct among hiring technologies we observed, because it attempts to promote equity without making judgements about specific people. Even if the predictions they offer are imperfect, such tools still prompt employers to spend time trying to make their descriptions more inclusive.

Moreover, since a number of other predictive hiring products—from job ads to screening tools—rely on the words and phrases from job descriptions to inform their predictions about candidates’ suitability, more inclusive language in job postings can influence everything from who ends up seeing job ads to who is invited to interview.

Advertising

Many employers use paid digital advertising to put job opportunities in front of a greater number of potential applicants. Today, employers have access to the same microtargeting, behavioral targeting, and performance-driven advertising tools as the broader e-commerce sector. How and where employers choose to use these tools plays an important role in determining the overall demographics of who learns about job postings and who ultimately applies.

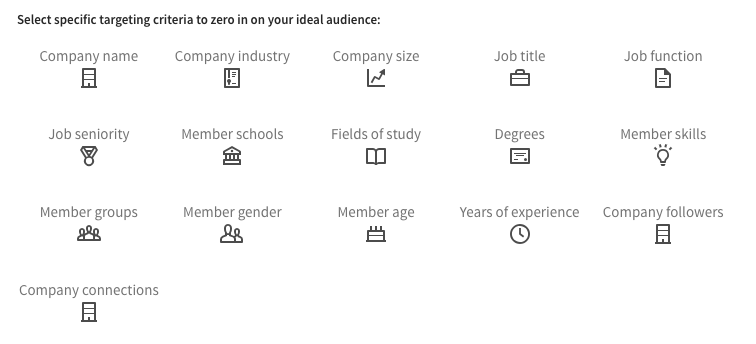

Different kinds of online ad platforms let employers target potential applicants in very different ways. Job board platforms offer employers the ability to promote their job postings to particular types of jobseekers. General purpose search engines allow employers to place their ads next to search queries, targeting users based on their search terms and geographic locations, among other factors. Social media sites allow employers to show ads that blend in with other social content, targeting based on a wide array of personal characteristics, including demographic data and inferred interests. And millions of individual websites and mobile apps let employers place ads alongside other web content; these ads can be targeted to users who share common features or interests, using a wide range of data sources. This “display” ad space is available to employers en masse through centralized ad networks.

Many ad networks use data that is both provided by users and inferred from their online activity. The data is used to automatically generate groups of users with certain shared attributes, which recruiters can then use to target ads (or to exclude people from seeing them). In selecting targeting options, employers define which users are eligible, though not guaranteed, to see a given job opportunity.

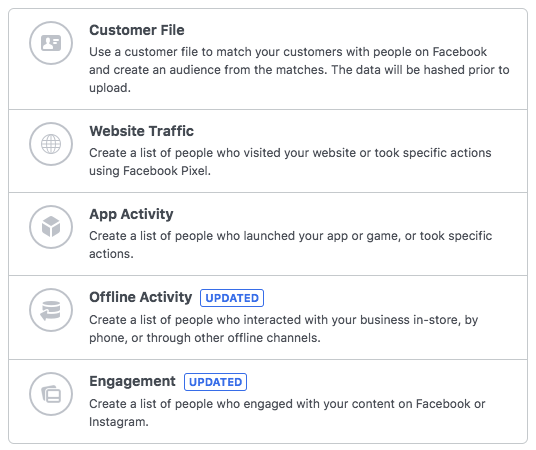

LinkedIn’s ad targeting options

Some platforms also offer employers the ability to target specific people, like people who previously visited an employer’s career website, or who began but did not complete an application.

Facebook Custom Audience targeting options

And many platforms, including Facebook, Google, and LinkedIn, offer advertisers the ability to serve ads to users who are predicted to be similar to those the employer initially wanted to reach.

Beyond advertisers’ own targeting choices, ad platforms themselves play a significant role in determining who within a target audience will actually see each ad. While employers may set initial targeting parameters, it is typically the case that advertising space is limited, and not everyone who is eligible to see an advertisement will ultimately have it presented to them. Platforms like Facebook and Google decide which ads are ultimately shown to whom, not only based on advertisers’ willingness to pay, but on the platforms’ own prediction of how likely a user is to engage with the ad (e.g., clicking on it) or to take another desired action (e.g., applying to the employer’s job on the company’s career website).
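The delivery step described above can be illustrated with a minimal sketch. It assumes, purely for illustration, that a platform ranks eligible ads for each user by the advertiser's bid multiplied by a predicted engagement rate. The `Ad` class, the `pick_ad` function, and all of the numbers are hypothetical and are not drawn from any platform's documentation.

```python
from dataclasses import dataclass


@dataclass
class Ad:
    name: str
    bid: float  # advertiser's willingness to pay (hypothetical units)


def pick_ad(ads, predicted_engagement):
    """Return the ad with the highest bid * predicted engagement for one user."""
    return max(ads, key=lambda ad: ad.bid * predicted_engagement[ad.name])


ads = [Ad("warehouse_job", bid=2.0), Ad("retail_sale", bid=1.5)]

# Hypothetical engagement predictions for two users in the same target audience.
user_a = {"warehouse_job": 0.10, "retail_sale": 0.05}
user_b = {"warehouse_job": 0.02, "retail_sale": 0.06}

print(pick_ad(ads, user_a).name)  # warehouse_job
print(pick_ad(ads, user_b).name)  # retail_sale
```

Both users are eligible for the job ad and the employer bids more than the competing advertiser, yet only one of them sees it, because the platform's own prediction decides the outcome.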

. . .

As legal scholar Pauline Kim has argued, “not informing people of a job opportunity is a highly effective barrier” to applying for that position. How employers advertise can sharply limit, or greatly expand, the types of people who even learn a job opportunity exists. The targeting and delivery techniques described above are powerful, commonplace tools of the recruitment trade. However, we worry that employers, ad platforms, and regulators do not yet fully appreciate their impact.

In particular, sourcing platforms that deliver ads based on optimizations derived from user behavior, such as the number of clicks or job applications, risk directing ads and notices away from demographics that are historically less likely to take those actions. This could narrow the universe of underrepresented groups who are even presented with opportunities.

The complexity and opacity of digital advertising tools make it difficult, if not impossible, for aggrieved jobseekers to spot discriminatory patterns of advertising in the first place. Even if they could, it is not always clear who can or should bear legal responsibility for advertising practices with discriminatory effect. In the offline world, advertisers have been held liable for unintentional advertising practices that “serve to freeze the effects of past discrimination.” However, it is unclear whether advertisers would be aware of these effects, or whether ad platforms themselves can or will be held liable for various discriminatory advertising practices. This is a fast-evolving area ripe for both empirical research and legal interpretation.

Digital advertising can also play a clear role in promoting equity. For example, federal contractors, who are obligated to “take affirmative action to ensure equal employment opportunity,” and other employers committed to diversity and inclusion, may want to proactively target underrepresented groups for their job ads and may legitimately need access to seemingly sensitive targeting categories or predictive targeting tools. Even so, U.S. legal guidelines about acceptable job advertising practices have yet to be updated to account for evolving digital tools.

Matching

Matching is the process of comparing job opportunities with prospective applicants, typically culminating in a ranked list of recommendations. For instance, jobseekers might see personalized job recommendations, while recruiters might receive a ranked list of potential candidates. Matching tools promise to connect the right applicants with the right job, but by the same token, they can silently hide certain opportunities from some candidates and suppress others from being seen by recruiters. Personalized job boards and other predictive matching technologies are popular among both employers and jobseekers, in some cases supplanting employment and staffing agencies.

ZipRecruiter is one prominent matching product. It is essentially an online job board with a range of personalized features for both employers and jobseekers. ZipRecruiter is a quintessential example of a recommender system, a tool that, like Netflix and Amazon, predicts user preferences in order to rank and filter information—in this case, jobs and job candidates. Such systems commonly rely on two methods to shape their personalized recommendations: content-based filtering and collaborative filtering. Content-based filtering examines what users seem interested in, based on clicks and other actions, and then shows them similar things. Collaborative filtering, meanwhile, aims to predict what someone is interested in by looking at what people like her appear to be interested in.
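The two filtering methods can be contrasted in a toy sketch. The job tags, the click data, and both scoring rules below are invented for illustration; they are deliberately simplified and are not a description of ZipRecruiter's system.

```python
def content_based(clicked, catalog, top_n=2):
    """Rank unseen jobs by how many tags they share with jobs the user clicked."""
    liked_tags = set()
    for job in clicked:
        liked_tags |= catalog[job]
    unseen = [j for j in catalog if j not in clicked]
    ranked = sorted(unseen, key=lambda j: (-len(catalog[j] & liked_tags), j))
    return ranked[:top_n]


def collaborative(user, clicks, top_n=2):
    """Rank unseen jobs by clicks from other users, weighted by overlap with this user."""
    mine = clicks[user]
    scores = {}
    for other, theirs in clicks.items():
        overlap = len(mine & theirs)
        if other == user or overlap == 0:
            continue
        for job in theirs - mine:
            scores[job] = scores.get(job, 0) + overlap
    ranked = sorted(scores, key=lambda j: (-scores[j], j))
    return ranked[:top_n]


# Hypothetical catalog (job -> descriptive tags) and click histories.
catalog = {
    "junior_analyst": {"analysis", "entry", "excel"},
    "senior_analyst": {"analysis", "senior", "excel"},
    "sales_rep": {"sales", "entry"},
    "sales_manager": {"sales", "senior"},
}
clicks = {
    "ana": {"junior_analyst"},
    "ben": {"junior_analyst", "sales_rep"},
    "cy": {"senior_analyst", "sales_manager"},
}

print(content_based(clicks["ana"], catalog))  # ['senior_analyst', 'sales_rep']
print(collaborative("ana", clicks))           # ['sales_rep']
```

For the same user, the content-based method surfaces the senior analyst role because it resembles what she clicked, while the collaborative method surfaces only what a similar user clicked.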

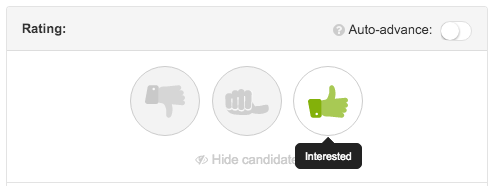

ZipRecruiter’s applicant rating interface

For example, on ZipRecruiter, employers can opt to give incoming applicants a “thumbs up.” As ZipRecruiter collects these positive signals, it uses a machine learning algorithm to identify other jobseekers in its system who have not yet applied for that role but who share characteristics with those already given a “thumbs up,” and automatically prompts them to apply. The details of the matching process make up ZipRecruiter’s special sauce, which considers not only basic demographic and skills information from resumes and other information added by jobseekers, but also insights gleaned from their behavior on the website.

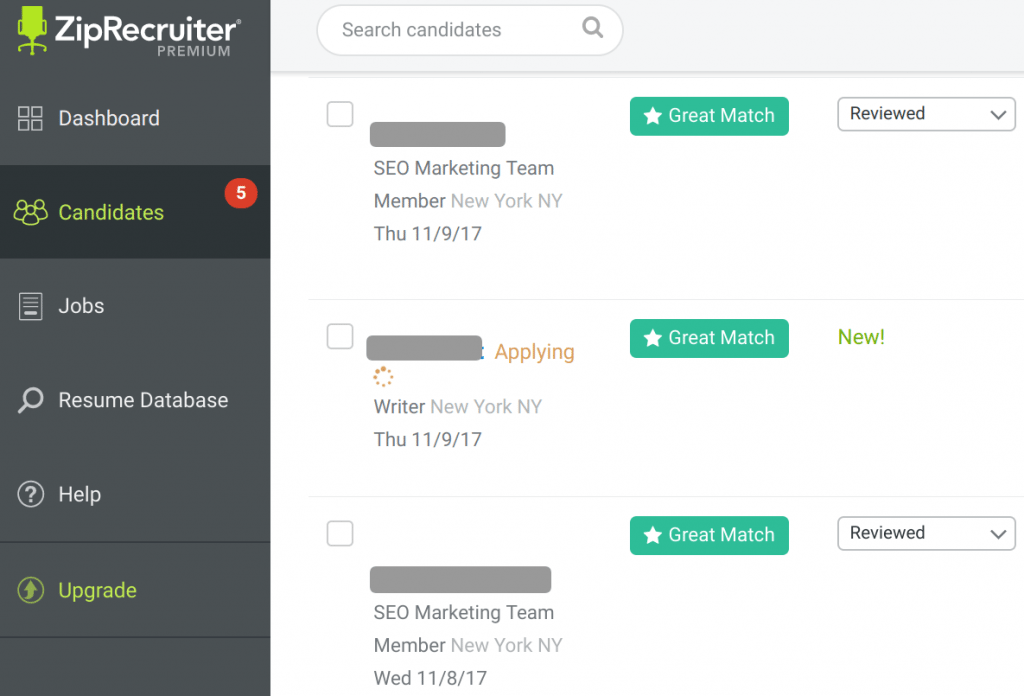

For example, if two jobseekers have applied to many of the same jobs, that will strengthen ZipRecruiter’s assessment of their similarity. When one of them applies for a new job, and that employer gives that applicant a “thumbs up,” the other is more likely to be nudged to apply for that same job. If the second jobseeker does apply, that person’s application is marked for the employer with a “great match” badge, essentially reinforcing the employer’s initial screening decisions.

ZipRecruiter’s “Great Match” badges
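The nudging behavior described above can be sketched under a strong simplifying assumption: that similarity between two jobseekers is measured only by the overlap in the jobs they have applied to (Jaccard similarity). The function names, the 0.5 threshold, and the data are hypothetical; the real matching process is proprietary and draws on many more signals.

```python
def jaccard(a, b):
    """Share of jobs two jobseekers have in common, from 0.0 to 1.0."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def jobseekers_to_nudge(job, thumbs_up, applications, threshold=0.5):
    """Find jobseekers who resemble a thumbs-up applicant but have not applied to `job`."""
    nudges = set()
    for liked in thumbs_up:
        for seeker, applied in applications.items():
            if seeker == liked or job in applied:
                continue
            # Compare application histories, leaving out the job in question.
            if jaccard(applications[liked] - {job}, applied) >= threshold:
                nudges.add(seeker)
    return sorted(nudges)


# Hypothetical application histories.
applications = {
    "p1": {"job_a", "job_b", "job_c", "job_new"},
    "p2": {"job_a", "job_b", "job_c"},
    "p3": {"job_x", "job_y"},
}

print(jobseekers_to_nudge("job_new", ["p1"], applications))  # ['p2']
```

In this sketch, the jobseeker with an unrelated history is never prompted, so whatever pattern drove the employer's first thumbs up carries over to who hears about the job next.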

According to the platform, its matching algorithm dramatically increases the fraction of preferable candidates in an applicant pool—at least in the eyes of a hiring manager. ZipRecruiter claims that without its algorithm, one in six applicants tends to get a thumbs up from an employer. But when its algorithm nudges “similar” candidates toward certain jobs, that rate increases to one in three applicants. One likely reason is that, as ZipRecruiter surfaces a job posting to jobseekers who are more likely to garner a thumbs up, it correspondingly suppresses the posting from others it deems less compatible.

ZipRecruiter uses similar algorithmic methods to filter jobs it displays to jobseekers, elevating certain openings based on their previous applications and other on-site activity and demoting others.

ZipRecruiter learns from user behavior

. . .

Job matching platforms like ZipRecruiter, and recommender systems more generally, present unique equity challenges. For one, tools that rely on attenuated proxies for “relevance” and “interest” could end up replicating the very cognitive biases they claim to remove. Content-based filtering can reinforce users’ own priors and cognitive biases. For example, if a woman with several years of experience tends to click on lower-level jobs because she doubts she is qualified for more senior positions, over time she may be shown fewer higher paying jobs than she would otherwise be qualified for. Collaborative filtering, on the other hand, risks stereotyping users because of the actions of others like them. For example, even if a woman frequently clicks on management positions herself, the system might learn that other, similar women tend to click on more junior positions, and might show her fewer management jobs than a similarly situated man—not due to her own preference, but because of the behavior of people the system deems to resemble her. Technical researchers are still trying to conceive of the right ways to benchmark and measure these systems, even outside of the hiring context.

These effects can arise even when a recommender system does not explicitly consider protected characteristics, like race or sex. For example, when Netflix users noticed they were being shown content that appeared to be personalized by race, it was not because Netflix was collecting or explicitly inferring users’ race, but merely predicting users’ preferences using those users’ own behavior, and the behavior of others who appeared to have similar preferences. The same phenomenon can occur with hiring recommender systems, albeit less visibly.

Job matching platforms like ZipRecruiter and LinkedIn might fall between the cracks of existing legal protections. Here again, the role of technological platforms is ambiguous. On one hand, job postings on these platforms are clearly “notices or advertisements” under Title VII. However, platforms currently enjoy significant immunity from liability for the conduct of other entities, such as employers, so it is not clear what legal obligations apply. The ACLU and others have argued that platforms can themselves be employment agencies and ought to be liable as such, but platforms contest this characterization. It is not even clear whether or when jobseekers using these tools would count as “applicants” under federal recordkeeping requirements, which were designed to help regulators monitor for disparate impact, even though some matching tools are making meaningful assessments about jobseekers’ qualifications before they explicitly apply for a particular role.

Headhunting

Headhunting is the practice of proactively reaching out to specific, qualified candidates. It is especially common when employers require specialized experience or are recruiting in competitive environments, often for higher-skill positions. Here, employers typically seek out “passive” candidates: people who are either unaware of a particular job opening or not actively looking to leave their existing job or rejoin the workforce.

Entelo, a popular tool among Silicon Valley and technology sector employers, searches dozens of sources like LinkedIn, resume databases, and public social media and work portfolio profiles to surface potential candidates who may be receptive to individual outreach. In addition to visually displaying information about prospects’ skills and work history, Entelo makes several predictions about each potential candidate.

First, Entelo predicts whether someone is likely to move jobs, using data like whether she has recently updated her skills on LinkedIn, aggregate data about career trajectories in her field (for instance, how long employees tend to stay at the company where she currently works), and her current employer’s “health” (e.g., recent layoffs, mergers, and stock fluctuations).

Entelo flags certain people as being “More Likely to Move”

Entelo also scores candidates on “company fit,” a measure based on whether a candidate has worked in companies of a similar size or industry as the recruiter’s company, and whether others have defected from the candidate’s current employer to the company interested in recruiting her.

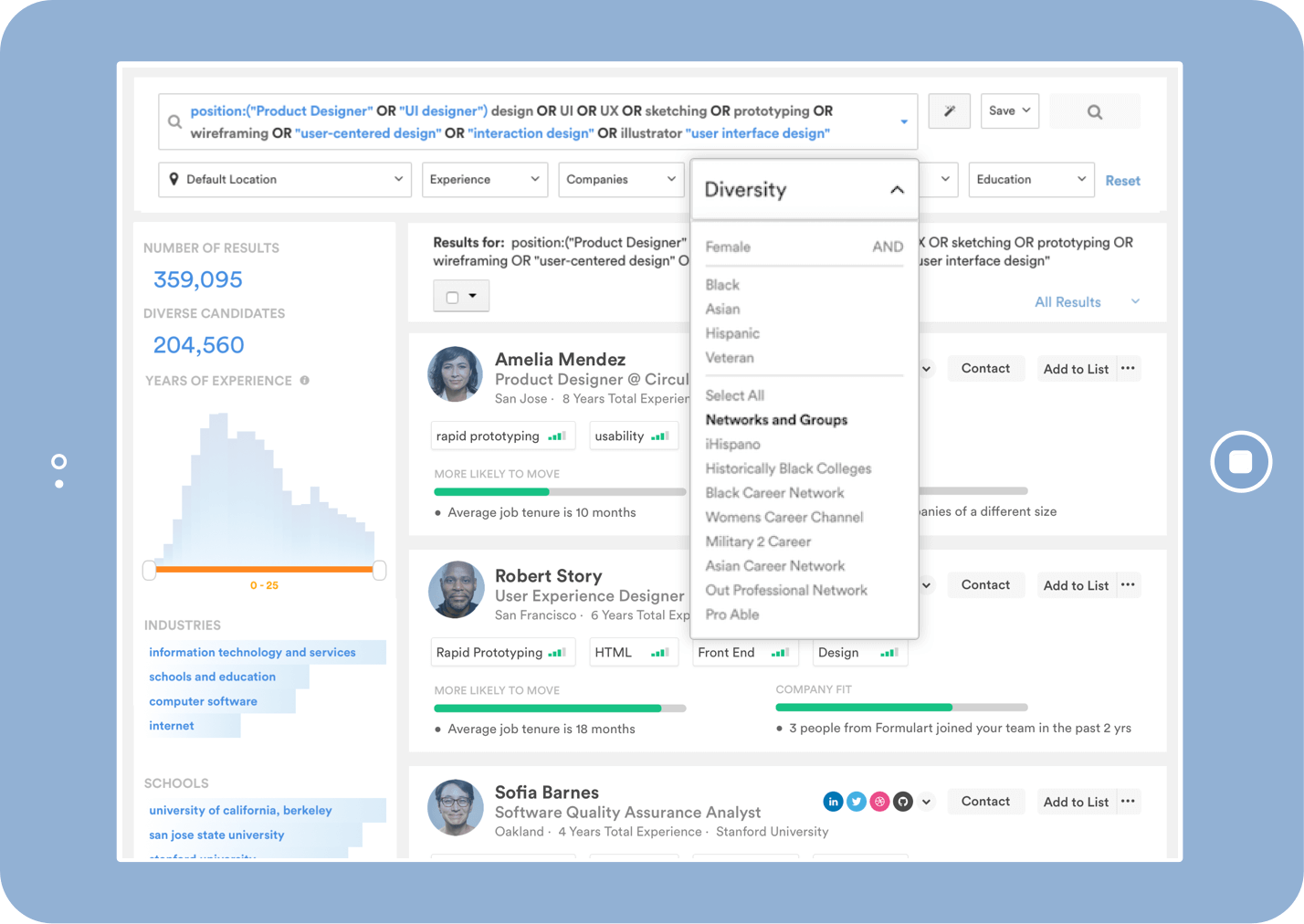

Notably, Entelo uses data analysis and prediction as a means to actively further employers’ diversity goals in several ways. First, the company predicts whether someone is a “diversity” candidate—for instance, a person of color, woman, or veteran—based on candidates’ public affiliations with sororities, clubs, historically Black colleges, or special interest honor societies. Employers actively looking to recruit diverse candidates can use these predicted labels to search for them within Entelo’s database of passive candidates. And importantly, employers cannot use those categories to exclude candidates from a search. Employers can also opt to use “Unbiased Sourcing Mode,” which obscures personal, sensitive, and protected characteristics from the interface as they review candidates.

Recognizing that women and minority candidates may not use the same language or list the same skills on their resumes and online profiles as other candidates, Entelo offers a feature called “peer-based skills” that uses machine learning to compare profiles and predict skills a candidate is likely to have but may not have explicitly listed. Finally, Entelo offers employers reports that provide basic race and gender breakdowns for the candidates whom that employer has searched for and engaged on the platform.

Entelo’s diverse candidate search function

LinkedIn also offers employers headhunting tools that rely on predictive indicators. Once recruiters select filters for candidates who have specific skills, LinkedIn returns a list of candidate profiles ranked by their “likelihood of being hired”—a measure the platform calculates using signals like whether a user is open to moving jobs, whether she follows the employer’s LinkedIn profile, and whether she is likely to respond to a message from a recruiter. The ranking also takes into account whether the candidate is from a region, industry, or company that the recruiter tends to prefer.

LinkedIn search results, sorted by “relevance”

Recently, LinkedIn updated its recruiter tools to balance the gender distribution in candidate search results, rather than sorting candidates purely by “relevance.” With this update, if the pool of potential candidates who fit given search parameters reflects a certain proportion of women, the platform will re-rank candidates so that every page of search results reflects that proportion. The company also plans to offer employers reports that track the gender breakdown of their candidates across several stages of the recruitment process, as well as comparisons to the gender makeup of peer companies.
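One way to picture this kind of re-ranking is the greedy sketch below. The report does not describe LinkedIn's implementation, so the function `rerank_proportionally`, its two-group simplification, and the sample data are all assumptions made for illustration. The idea is to walk down the relevance-ranked list and, at each position, make sure neither group has fallen behind its share of the overall pool.

```python
def rerank_proportionally(ranked, is_woman):
    """Reorder a relevance-ranked list so every prefix roughly keeps the pool's gender mix."""
    women = [c for c in ranked if is_woman[c]]
    others = [c for c in ranked if not is_woman[c]]
    total, n_women, n_others = len(ranked), len(women), len(others)
    position = {c: i for i, c in enumerate(ranked)}
    result, w_used, o_used = [], 0, 0
    for k in range(1, total + 1):
        # Minimum number from each group that the first k results should contain.
        need_women = (n_women * k) // total
        need_others = (n_others * k) // total
        if w_used < need_women:
            take_woman = True
        elif o_used < need_others:
            take_woman = False
        elif w_used == n_women:
            take_woman = False
        elif o_used == n_others:
            take_woman = True
        else:
            # No group is behind, so fall back to the original relevance order.
            take_woman = position[women[w_used]] < position[others[o_used]]
        if take_woman:
            result.append(women[w_used])
            w_used += 1
        else:
            result.append(others[o_used])
            o_used += 1
    return result


# Hypothetical pool: one third women, all ranked at the bottom by "relevance".
ranked = ["m1", "m2", "m3", "m4", "w1", "w2"]
is_woman = {"m1": False, "m2": False, "m3": False, "m4": False, "w1": True, "w2": True}

print(rerank_proportionally(ranked, is_woman))
# ['m1', 'm2', 'w1', 'm3', 'm4', 'w2']
```

With three results per page, the original ordering would show no women on the first page; after re-ranking, each page contains one woman in three, matching the pool.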

. . .

Headhunting tools present some of the same fundamental concerns as matching tools. Rather than predicting more direct signals of “job success,” they often end up predicting recruiter or jobseeker actions, which can amplify biased social behaviors. This can happen especially quickly when predictive models are updated dynamically, as in recommender systems. For example, if an employer tends to click on the profiles of male software engineers, not only might she be shown more male software engineers, but other recruiters seeking candidates for similar roles may also see more male software engineers.

Moreover, male software engineers may start seeing these software engineering jobs at a greater rate than women, whose profiles are not being clicked on at the same rate. Without intervention, these effects could be amplified over time, since people can only act on profiles and jobs that they are shown. These tools don’t completely block recruiters from seeing certain types of candidates, or certain types of candidates from seeing certain jobs. But the cumulative effect of being buried several pages deep in search results could be much the same.
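The amplification dynamic can be made concrete with a small, deliberately stylized simulation. It assumes that the share of male profiles shown in the next round simply equals the share of clicks that male profiles received in the current round. The function name, the click rates, and this update rule are hypothetical and are not taken from any vendor's system.

```python
def simulate_exposure(male_share, click_male, click_female, rounds):
    """Track the share of male profiles shown when exposure follows past clicks."""
    history = [male_share]
    for _ in range(rounds):
        male_clicks = male_share * click_male
        female_clicks = (1 - male_share) * click_female
        # Next round's exposure mirrors this round's share of clicks.
        male_share = male_clicks / (male_clicks + female_clicks)
        history.append(male_share)
    return history


# Hypothetical recruiter who clicks male profiles 60% of the time and female profiles 40%.
shares = simulate_exposure(male_share=0.5, click_male=0.6, click_female=0.4, rounds=5)
print([round(s, 2) for s in shares])
```

Starting from an even split, a modest difference in click rates pushes the share of male profiles shown from one half to roughly 0.88 after five rounds under these assumptions.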

There are also familiar legal ambiguities. Regulators lack clear guidelines to assess disparate impact. Nor is it clear whether the candidates considered by these tools are “applicants” for recordkeeping and assessment purposes.

Headhunting tools appear prone to explicitly prioritize measures of “company fit” or “likelihood of being hired” at that company. To some extent, these measures resemble analog assessments of “culture fit,” which might disadvantage applicants who have not had the opportunity to work in similar companies, despite their abilities.

There are some encouraging new practices in this class of technology. Entelo’s diversity-aware reporting tool could help employers identify their recruiting activities that may be biased against women and candidates of color. LinkedIn’s gender-aware candidate search results feature is another step in the right direction. Vendors should carefully consider expanding such an approach beyond gender, to ensure that other kinds of underrepresented candidates are surfaced more proportionally to the makeup of the underlying candidate pool. In addition, Entelo’s “peer-based skills” feature, which augments the skills on a candidate’s profile, claims to lift up qualified female candidates. In theory, such a function could do so, but the company’s public statements about the feature are not detailed enough for us to confidently say that the tool works as described.

Screening

In the screening stage, employers formally begin reviewing applications, rejecting unqualified or relatively weak applicants and prioritizing the remainder for closer consideration. Here, predictive technologies assess, score, and rank applicants according to their qualifications, soft skills, and other capabilities to help hiring managers decide who should move on to the next stage. These tools help employers quickly whittle down their applicant pool so they can spend more time considering the applicants deemed to be strongest. A substantial number of job applicants are automatically or summarily rejected during this stage.

Qualifications

Many employers will consider applicants’ existing qualifications, such as prior experience in a given role, certifications, or proficiency with particular software systems. In some contexts—like retail and service sectors—nearly all minimally qualified candidates may be offered employment. For lower-volume recruitment, meeting hard qualification requirements is a prerequisite for more in-depth consideration.

Many simple applicant tracking systems offer features to screen out applicants who don’t appear to have the minimum requirements or skills, based on lists of predefined questions or keywords, often called “knockout questions.” However, more advanced tools, such as interactive online tests or software tools that automatically analyze written answers, aim to improve the traditional screening process using more sophisticated analysis.

One example is Mya, a chatbot that allows employers to engage with jobseekers in an interactive manner. Chatbots like Mya are gaining popularity as tools to automate the screening process, particularly for employers trying to fill high-volume, high-turnover jobs. Like traditional job application software, Mya asks jobseekers basic screening questions. The tool does not appear to make nuanced predictions about candidates, but rather interprets written answers to predefined questions and responds in a conversational manner.

Mya can begin interacting with jobseekers before they submit formal applications, answering initial questions by chat, text message, and email. The bot extracts key details from text-based conversations using natural language processing (NLP), and then uses basic decision trees to determine the appropriate response and action.
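A heavily simplified sketch of this two-step pattern follows: extract a few details from free text, then walk a small decision tree. Regular expressions and substring checks stand in for real natural language processing, and the requirements, field names, and actions are hypothetical rather than taken from Mya.

```python
import re


def extract_details(message):
    """Pull a few structured facts out of free text (a crude stand-in for NLP)."""
    text = message.lower()
    years = re.search(r"(\d+)\s+years?", text)
    return {
        "years_experience": int(years.group(1)) if years else None,
        "has_license": "forklift license" in text,
    }


def next_action(details, min_years=1):
    """Walk a small decision tree over the extracted details."""
    if details["years_experience"] is None:
        return "ask_about_experience"
    if details["years_experience"] < min_years:
        return "suggest_other_openings"
    if not details["has_license"]:
        return "ask_about_license"
    return "pass_to_recruiter"


reply = "I have 3 years of warehouse work and a forklift license."
print(next_action(extract_details(reply)))  # pass_to_recruiter
print(next_action(extract_details("I just finished school.")))  # ask_about_experience
```

Even in this toy version, the outcome depends on whether a candidate happens to phrase an answer the way the extraction step expects; for instance, the substring check above cannot tell "a forklift license" from "no forklift license."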

The Mya chatbot can pre-screen candidates

When Mya determines that candidates meet an employer’s predefined requirements, it automatically passes them directly to the next stage of the process or puts them in touch with a human recruiter. If the bot detects candidates that are a “poor-fit,” it can be configured to preemptively discourage them from applying for a job, “reject[ing] candidates gently, suggesting other job openings they may be qualified for and/or inviting them to register in the talent pool.”

Other screening tools help recruiters look beyond keywords and pre-set questions, such as reviewing applicants’ resumes automatically using machine learning techniques.

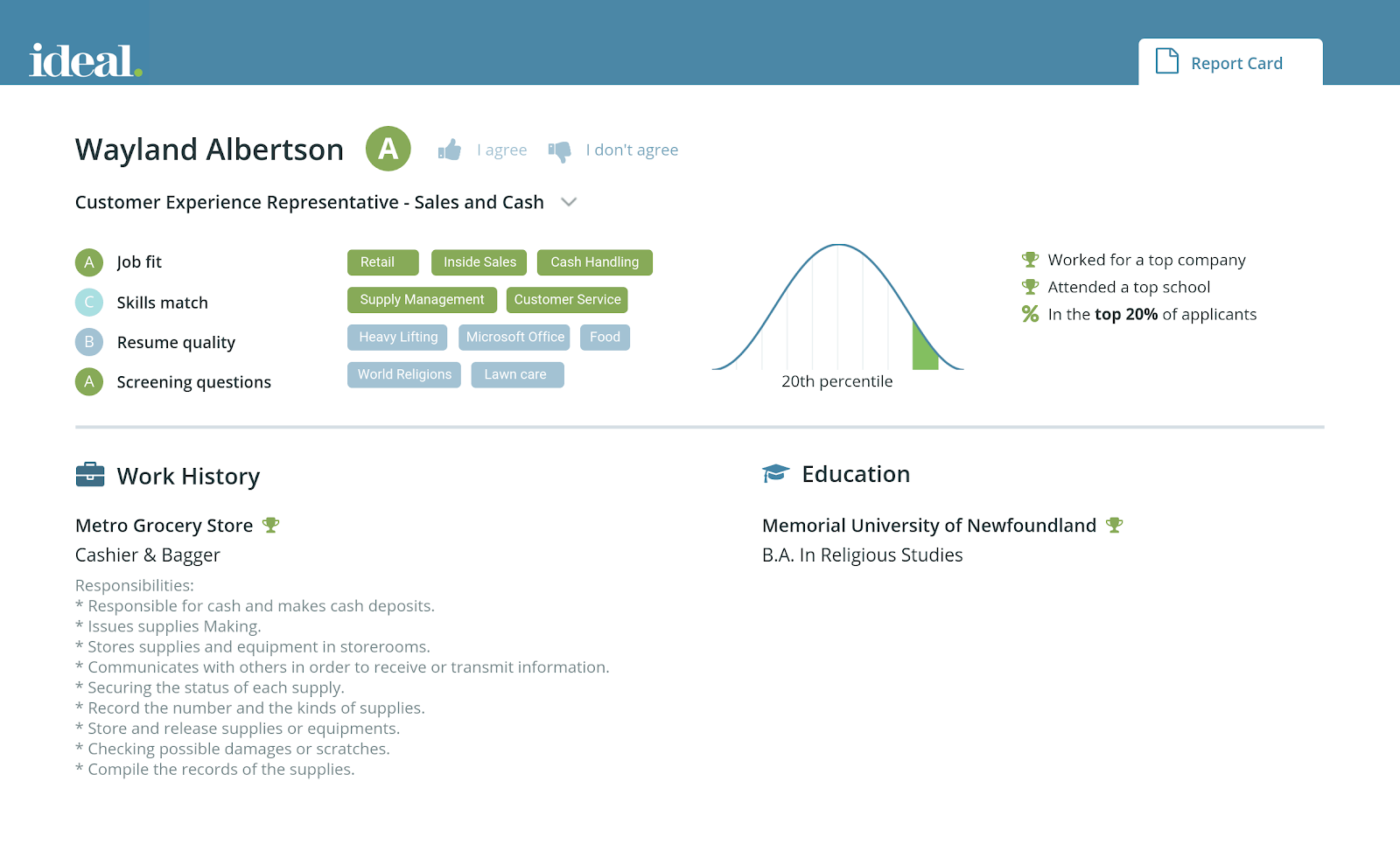

One such tool, Ideal, predicts how closely an applicant’s resume matches the employer’s minimum and preferred qualifications. Ideal extracts and interprets the text of an applicant’s resume and, based on that employer’s past screening and hiring decisions, assigns the applicant a letter grade, from A to D.

Ideal’s dashboard displays letter grades and other predicted details

Ideal allows hiring managers to give feedback to its screening algorithm, by indicating whether they “agree” or “don’t agree” with the assessment of a particular applicant.

. . .

Tools like Mya and Ideal offer employers ways to more efficiently screen large applicant pools with relatively standardized procedures. In theory, such processes could benefit qualified candidates who might have been accidentally ignored or screened out by strict knockout questions, or due to resource limitations or interpersonal biases. Unsurprisingly, both companies highlight the fact that their software does not explicitly consider factors like race, gender, or socioeconomic status.

When screening systems aim to replicate an employer’s prior hiring decisions, as Ideal does, the resulting model will likely reflect prior interpersonal, institutional, and systemic social biases. Although it might seem natural for screening tools to consider previous hiring decisions, those decisions often reflect the very patterns many employers are actively trying to change through diversity and inclusion initiatives. Workplace performance data, while itself at risk of reflecting similar biases, may at least surface nontraditional signals of likely success an employer has not previously considered.

Moreover, although natural language processing techniques have advanced in recent years, researchers have found that NLP systems trained on real-world data can quickly absorb society’s racial and gender biases. One study found, for example, that NLP tools learned to associate African-American names with negative sentiments, while female names were more likely to be associated with domestic work than professional or technical occupations. Limitations in the diversity of NLP training data mean they may perform poorly with candidates who have regional or cultural dialects, or for whom English is a second language. Tools that rely on NLP could therefore reflect “expected” linguistic patterns and, as such, could misunderstand, penalize, or even unfairly screen out minority candidates. Some researchers are seeking to develop more inclusive models, but such research is still in its infancy.

Finally, while chatbots used in hiring today appear to be relatively simple—following a pre-approved script—future hiring chatbots might be given more flexibility. If vendors begin to experiment with chatbots that learn from social interactions with users, they will need to take care that they don’t autonomously parrot user-generated misbehavior and prejudices.

Assessments

Many employers, particularly larger employers, use pre-employment assessments to measure aptitude, skills, and personality traits to differentiate potential top performers from other applicants. Today’s assessment tools, which often build on these traditional tests, are appealing for employers who want to spot the strongest candidates among a large pool of qualified candidates.

Predictive assessment tools are just emerging, but they are quickly gaining popularity. Some vendors offer “off-the-shelf” assessments for a variety of job functions (like customer service, sales, and project management) and competencies (like “problem solving” and “interpersonal skills”). For example, job board website Indeed offers a library of such tests that employers can include in their online job applications. Applicants take the tests during the online application process, and Indeed automatically scores them “with the help of machine learning.” These ready-made assessments are intended to predict generic job performance and aren’t specific to a given employer or applicant pool.

Other vendors offer custom-built assessments for particular employers, and for specific roles. These bespoke assessments use the employer’s workforce and performance data to predict how new applicants may compare to current “successful” employees.

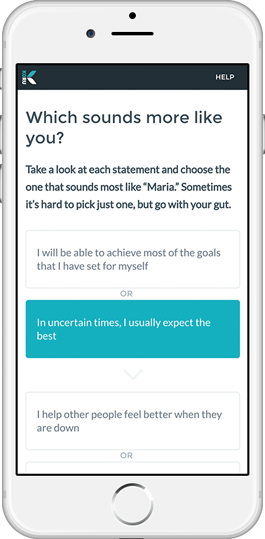

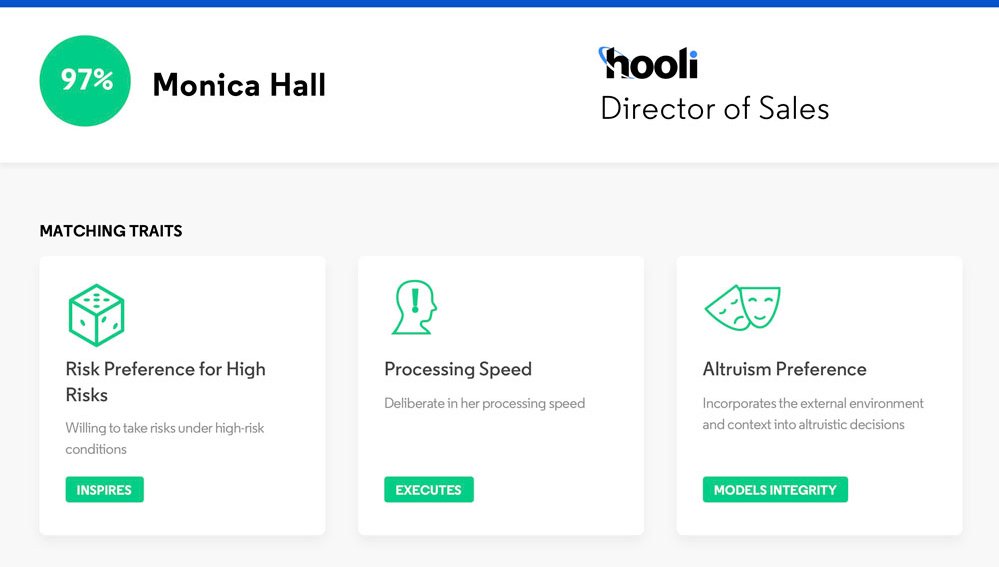

One vendor, Koru, offers an assessment tool that infers candidates’ personality traits to predict future job performance. The tool poses questions to candidates through a self-assessment survey, and based on their answers, scores candidates on personal attributes like “grit,” “rigor,” and “teamwork,” as well as their predicted alignment with an employer’s desired traits.

Koru’s self-assessment interface

To determine the desired trait profile for a specific employer, Koru has a group of existing employees complete its assessment, collecting several hundred data points per employee. It cross-references that information with the employer’s own performance indicators for those employees (like employee reviews, promotions, or sales numbers) to identify the personality traits that most differentiate a company’s high performers from its low performers. The result is a “fingerprint” for a specific position—that is, the particular mix of personality traits that Koru finds to be most correlated with success on the job, against which future applicants are evaluated.
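The general shape of such a “fingerprint” can be sketched as follows. Koru does not disclose its method, so everything here is an assumption: the fingerprint is taken to be the gap in average trait scores between high and low performers, and an applicant's fit is a weighted share of the maximum possible score. The trait scores, the 0 to 10 scale, and the function names are hypothetical.

```python
from statistics import mean


def build_fingerprint(employees):
    """Gap in average trait score between high and low performers, per trait."""
    high = [e["traits"] for e in employees if e["high_performer"]]
    low = [e["traits"] for e in employees if not e["high_performer"]]
    traits = high[0].keys()
    return {t: mean(h[t] for h in high) - mean(lo[t] for lo in low) for t in traits}


def fit_score(applicant_traits, fingerprint, max_score=10):
    """Percentage fit, weighting each trait by how strongly it separated high performers."""
    weights = {t: gap for t, gap in fingerprint.items() if gap > 0}
    if not weights:
        return 0.0
    earned = sum(w * applicant_traits[t] for t, w in weights.items())
    possible = sum(w * max_score for w in weights.values())
    return round(100 * earned / possible, 1)


# Hypothetical existing employees, labeled by the employer's own performance indicators.
employees = [
    {"high_performer": True, "traits": {"grit": 8, "rigor": 6, "teamwork": 5}},
    {"high_performer": True, "traits": {"grit": 6, "rigor": 8, "teamwork": 5}},
    {"high_performer": False, "traits": {"grit": 4, "rigor": 6, "teamwork": 5}},
    {"high_performer": False, "traits": {"grit": 2, "rigor": 4, "teamwork": 5}},
]

fingerprint = build_fingerprint(employees)
print(fingerprint)  # {'grit': 4, 'rigor': 2, 'teamwork': 0}
print(fit_score({"grit": 5, "rigor": 10, "teamwork": 10}, fingerprint))  # 66.7
```

The labels of who counts as a high performer come entirely from the employer, so any skew in reviews, promotions, or sales assignments flows directly into the weights against which new applicants are scored.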

For each new applicant, the employer receives an overall percentage “fit” score, as well as individual scores for specific characteristics and priority skills.

Koru’s assessment results, including a “predictive fingerprint”

Based on candidates’ predicted fit scores, Koru sorts them for review, and the employer can filter the list of candidates by “low,” “medium,” and “high” fit, by specific strengths, and by standard resume information like college, major, and prior work experience.

Like many other predictive hiring tools, Koru scores and ranks candidates

The company has mentioned on several occasions that its tests have been validated and evaluated for adverse impact on women and minority candidates, but it discloses neither its methods nor the results of its analysis.

Like Koru, other vendors seek to assess candidates’ personality traits, but rather than asking candidates to fill out a survey—which candidates could fill out inaccurately—they offer games and interactive activities that purport to measure candidates’ behaviors more directly.

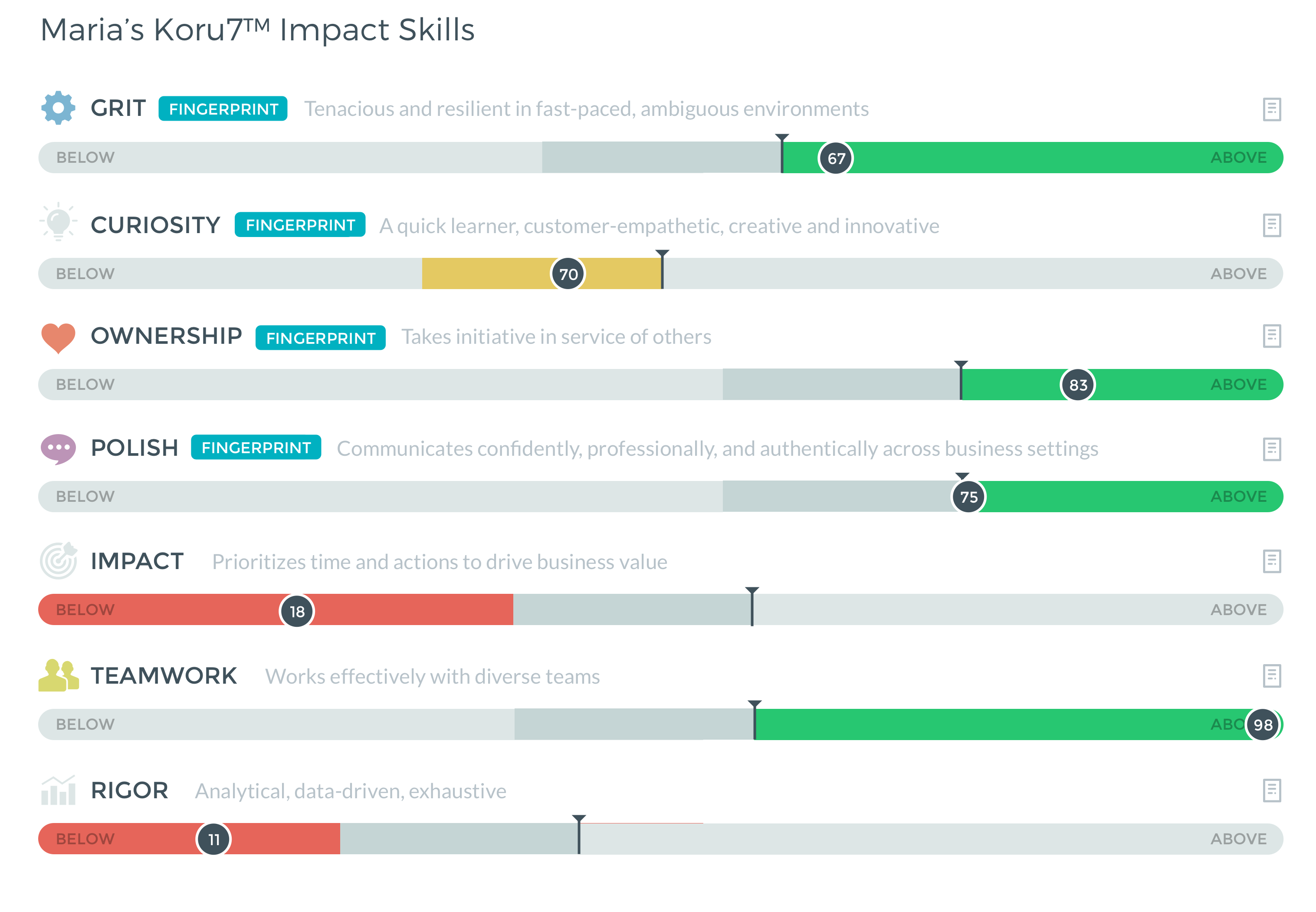

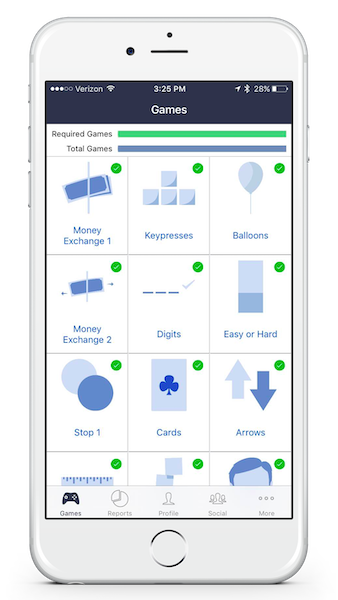

Pymetrics is one prominent vendor that offers “neuroscience” web and mobile games to measure cognitive, social, and emotional traits of candidates, such as processing speed, memory, and perseverance. For instance, one of its games flashes red and green dots on the screen and asks players to click when they see a red dot. The game appears to measure candidates’ reaction times, but in fact is used to assess candidates’ impulsivity, attention span, and ability to learn from mistakes.

Pymetrics’ interactive games

Like Koru, Pymetrics builds custom predictive models for each employer and for specific positions. Before doing so, the company starts by gathering data from tens of thousands of people (not specific to the employer) in order to distill baseline “trait profiles” for different types of game players. The employer then asks current employees to play many of Pymetrics’ stock games. To build a predictive model, Pymetrics applies machine learning techniques to determine which traits—as measured by its games—best differentiate the employer’s top performers from its other employees. Of course, for this to work, the employer needs to tell Pymetrics who it considers to be its top performers, based on whatever metrics the employer is already using to assess its employees.

When the Pymetrics model is ready, the employer asks each new job candidate to play the games. Based on their game play, Pymetrics calculates a percentage score for each candidate, indicating how well that candidate matches with the employer’s desired suite of traits for the job.

Pymetrics’ assessment results

Candidates whose scores fail to meet the employer’s predefined threshold are automatically rejected for the specific role. Interestingly, if the employer is hiring for multiple roles, Pymetrics offers a “common application”-style service, redirecting candidates to other open roles with the same employer, or elsewhere, for which their inferred traits appear to be a better match.

Pymetrics is adamant that its assessments comply with U.S. legal requirements. The company appears to be aware that how employers currently assess “top” performers is very likely to be biased along gender and racial lines, and that such biases could easily be reflected in the resulting models.

Pymetrics does offer some public explanation regarding the steps it takes to “de-bias,” or mitigate observed disparities in, its models. The company explains that it uses statistical techniques to remove obvious demographic biases when evaluating behavioral traits. It also tests its models for differential impact along gender and racial lines. When statistical disparities are detected, Pymetrics apparently further adjusts its models in an attempt to compensate, though it does not describe the details of this stage of the process. In May 2018, Pymetrics publicly released the source code of an internal tool it developed to identify biases in its own models. While this is a worthwhile step, Pymetrics does not make the models that it develops for employers available for external, independent auditing.

. . .

Pre-employment tests have a deeply troubled history, and have long been decried as being inherently discriminatory against both people of color and people with disabilities. The newest assessment offerings raise similar questions and concerns about validation, structural biases, and their influence on human decision-making.

Tools like Koru and Pymetrics exemplify some of the most fundamental concerns about predictive technology used in hiring. The very act of differentiating high performers from low performers often reflects subjective evaluations, which are a notorious source of discrimination. Models based on these practices can mirror undesirable social patterns. Even when these tools accurately infer traits that current, successful employees share, they could easily turn away equally talented candidates who don’t happen to share those characteristics. Inferred traits may not actually have any causal relationship with performance, and at worst, could be entirely circumstantial. Tools with “common application” features could rely on such traits to unfairly redirect certain candidates to lower-status jobs.

It is not clear that existing legal best practices apply to, or provide an effective check on, these tools. The EEOC’s guidelines for “tests and other selection procedures” say that these tests and procedures should be “validated”—that is, shown to be sufficiently related to or predictive of job performance. Perhaps because of this guidance, most bespoke assessment tools we observed, including Koru and Pymetrics, are not built to incorporate feedback in real time, updating themselves as more candidates are considered and hired. Rather, the models appear to be created more deliberately, with distinct models built for each position and each employer. Moreover, because machine learning tools enable employers to correlate nearly any test to some aspect of job performance, existing validation guidelines may be ill-equipped to prevent discriminatory outcomes.

Validation notwithstanding, such tools (and most personality tests) are built on fundamental psychological theories of human behavior that reflect particular historical and social patterns. Applicants of different genders or from different cultural backgrounds could describe themselves or act differently, for instance, even if they have similar competencies. Many psychology and behavioral research studies have relied on college students as subjects, and researchers have questioned whether those studies can truly be generalized to wider populations. New social science research methods, like those that use online crowdsourcing techniques, allow researchers to access a wider diversity of subjects, but such methods present their own unique experimental validity and ethical challenges. Either way, such tests could penalize jobseekers who don’t fit a traditional mold, especially those with disabilities.

Also concerning is the fact that many assessment systems assign candidates specific, numerical “fit” scores, and then rank and display candidates to recruiters according to those scores. This can create the perception of substantial difference between candidates where there may be little, if any. The problem is especially stark when (as is common) predictive models are based on employee performance data, which employers often admit, at least in casual settings, are of poor quality. Even for candidates who pass an initial screening round, these numbers and rankings create an illusion of statistical accuracy and specificity that could color how recruiters view candidates during the remainder of the hiring process.

Finally, the information that’s displayed to employers by a tool’s user interface can have subtle but powerful effects on hiring outcomes. For instance, recruiters will likely focus first on candidates with the very highest scores. But if black and white candidates pass an assessment at equivalent rates, and if black candidates on average tend to receive marginally lower passing scores than white candidates, black candidates will likely fare worse over time. One vendor, Applied, demonstrates a promising approach by randomizing the order in which candidate materials are shown to human reviewers.
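The ranking effect described above can be made concrete with a small, entirely hypothetical example. In the sketch below, five candidates from each of two groups pass the assessment, but group B’s passing scores are three points lower on average. The scores, the group labels, and the assumption that a recruiter reviews only the first four candidates are all invented for illustration. The shuffle is a generic randomization and does not represent Applied’s implementation.

```python
import random

def first_k_by_group(candidates, k):
    """Count how many of the first k candidates belong to each group."""
    counts = {}
    for c in candidates[:k]:
        counts[c["group"]] = counts.get(c["group"], 0) + 1
    return counts

# Everyone in this pool passed; both groups pass at the same rate.
passed = (
    [{"group": "A", "score": s} for s in (92, 90, 88, 86, 84)]
    + [{"group": "B", "score": s} for s in (89, 87, 85, 83, 81)]
)

# Score-ordered display: the recruiter sees the highest scores first.
ranked = sorted(passed, key=lambda c: c["score"], reverse=True)
ranked_counts = first_k_by_group(ranked, 4)  # {'A': 3, 'B': 1}

# Randomized display: average number of group B candidates among the
# first four, estimated over many shuffles with a fixed seed.
rng = random.Random(0)
trials = 10_000
b_total = 0
for _ in range(trials):
    shuffled = passed[:]
    rng.shuffle(shuffled)
    b_total += first_k_by_group(shuffled, 4).get("B", 0)
avg_b_when_shuffled = b_total / trials  # close to 2 of 4
```

Under score ordering, three of the first four candidates reviewed come from group A even though both groups passed in equal numbers. Under random ordering, the first four are split evenly on average.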

Interviewing

In the interview stage, employers interact directly with individual applicants, and hiring decisions often crystallize at this stage. Emerging tools at this stage claim to measure applicants’ performance in video interviews, by automatically analyzing verbal responses, tone, and even facial expressions. Employers might use these tools to save interviewers time, relieve scheduling burdens, and standardize what is often seen as an inescapably subjective part of the hiring process.

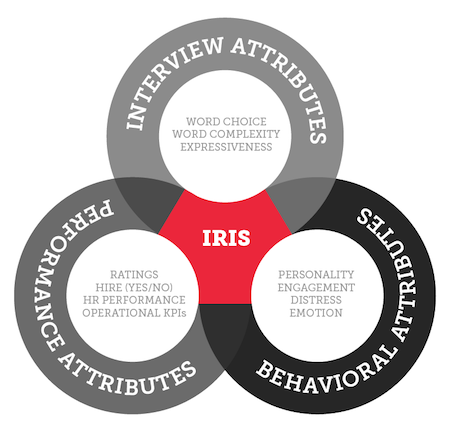

One prominent video interviewing company, HireVue, lets employers solicit recorded interview answers from applicants, and then “grades” these responses against interview answers provided by current, successful employees.

HireVue analyzes facial expressions, language patterns, and audio cues

More specifically, HireVue’s tool parses videos using machine learning, extracting signals like facial expression and eye contact, vocal indications of enthusiasm, word choice, word complexity, topics discussed, and word groupings. It uses these signals to create a model that claims to capture relationships between interview responses and workplace performance, based on the employer’s preexisting metrics.

HireVue’s description of the data included in its models

As new candidates submit responses for an open role, HireVue uses these models to produce an “insight score” of 0-100 for each candidate. Employers can choose to automatically pass high-scoring candidates along for further review. Inversely, candidates who score below a certain threshold can be automatically rejected.

HireVue says it tests the models it creates for certain kinds of bias. For example, HireVue claims to test each model on different demographic subgroups in order to detect adverse impact on the basis of gender, race, and age. If such bias within the model is detected, the company explains that it identifies the specific factors in the model that contribute to those differences and removes them before retraining, validating, and deploying the new model. Once an employer begins accepting applications, the model is periodically checked for both accuracy and adverse impact.

. . .

There is significant public concern about video interviewing systems like HireVue, and for good reasons. Speech recognition software can perform poorly, especially for people with regional and nonnative accents. Facial analysis systems can struggle to read the faces of women with darker skin. Both kinds of systems are likely to improve over time, as new and more inclusive data sets become available.

But the critiques go deeper than accuracy. Some skeptics question the legitimacy of using physical features and facial expressions that have no credible, causal link with workplace success, to make or inform hiring decisions. Tests that have the effect of considering someone’s immutable characteristics—even if they do so in a facially legal way—may violate expectations of dignity and justice, and prevent candidates from making a good-faith effort to demonstrate their suitability for a job. Moreover, some worry that interviewees might be rewarded for irrelevant or unfair factors, like exaggerated facial expressions, and penalized for visible disability or speech impediments.

In response to these critiques, HireVue, like many other vendors, points out that it does not make any decisions about whom to hire, but merely helps to inform human recruiters. But even if affirmative selection decisions are made by humans, automated rejections are still concerning. On the bright side, HireVue’s software at least appears to allow employers to hide its automatically generated “insight score” from subsequent reviewers, potentially mitigating overreliance on its measurements further along in the hiring process.

While HireVue seems to take some steps to remove bias from the models it creates, the company hasn’t shared many details about how it does so. Absent further transparency, advocates and regulators cannot fully assess the efficacy of its efforts.

What About De-Biasing?

In recent years, academic and industry researchers have been working to develop techniques to “de-bias” predictive models. These techniques often involve testing for disparate outcomes (using collected or inferred protected characteristics) and then adjusting the model’s behavior accordingly.
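A minimal sketch of the testing step might look like the following. It compares each group’s selection rate to that of the most-selected group and flags ratios below 0.8, a threshold borrowed from the “four-fifths” rule of thumb in U.S. federal selection guidelines. The applicant data and function names are hypothetical, and no vendor’s actual test is shown here.

```python
def selection_rates(outcomes):
    """outcomes: iterable of (group, was_selected) pairs -> {group: rate}."""
    totals, selected = {}, {}
    for group, was_selected in outcomes:
        totals[group] = totals.get(group, 0) + 1
        selected[group] = selected.get(group, 0) + int(was_selected)
    return {g: selected[g] / totals[g] for g in totals}

def impact_ratios(rates):
    """Each group's selection rate divided by the highest group's rate."""
    best = max(rates.values())
    if best == 0:
        # Nobody was selected, so the rates do not differ across groups.
        return {g: 1.0 for g in rates}
    return {g: r / best for g, r in rates.items()}

# Hypothetical screening outcomes: 50 of 100 men and 35 of 100 women pass.
outcomes = (
    [("men", True)] * 50 + [("men", False)] * 50
    + [("women", True)] * 35 + [("women", False)] * 65
)

ratios = impact_ratios(selection_rates(outcomes))
flagged = [g for g, r in ratios.items() if r < 0.8]  # ['women']
```

A check like this only detects a disparity. Deciding how to adjust the model in response is a separate and harder step.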

However, best practices have yet to crystallize. Many techniques maintain a narrow focus on individual protected characteristics like gender or race, and rarely address intersectional concerns, where multiple protected traits produce compounding disparate effects. The issue itself is only starting to emerge as a research focus in the computer science community.
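To see why single-attribute checks can miss intersectional disparities, consider the hypothetical counts in the sketch below. The numbers are invented for illustration, and the 0.8 threshold is an assumed rule of thumb. Checked by gender alone or by race alone, every group clears the threshold, yet one intersectional subgroup does not.

```python
from collections import defaultdict

def impact_ratios(records, attrs):
    """Selection rate of each subgroup (defined by attrs), relative to
    the subgroup with the highest rate."""
    applied = defaultdict(int)
    selected = defaultdict(int)
    for r in records:
        key = tuple(r[a] for a in attrs)
        applied[key] += r["applied"]
        selected[key] += r["selected"]
    rates = {k: selected[k] / applied[k] for k in applied}
    best = max(rates.values())
    if best == 0:
        return {k: 1.0 for k in rates}  # nobody selected in any subgroup
    return {k: rate / best for k, rate in rates.items()}

# Hypothetical applicant counts, 100 per subgroup.
records = [
    {"gender": "man",   "race": "white", "applied": 100, "selected": 50},
    {"gender": "man",   "race": "black", "applied": 100, "selected": 45},
    {"gender": "woman", "race": "white", "applied": 100, "selected": 48},
    {"gender": "woman", "race": "black", "applied": 100, "selected": 35},
]

by_gender = impact_ratios(records, ["gender"])        # lowest ratio about 0.87
by_race = impact_ratios(records, ["race"])            # lowest ratio about 0.82
by_both = impact_ratios(records, ["gender", "race"])  # lowest ratio 0.70

flagged = [k for k, v in by_both.items() if v < 0.8]  # [('woman', 'black')]
```

The number of subgroups multiplies with each attribute added, so real applicant pools can leave some intersections with too few people to measure reliably.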

Some hiring technology vendors seem to have embraced de-biasing methods to address racial and gender discrimination, which should be encouraged and celebrated. However, there is still more work to do: We did not identify any vendor that appeared to assess adverse impact based on other sensitive features, like religion, national origin, disability, or sexual orientation, which could just as easily emerge when predictive tools are used.

Bias testing in hiring tools today is almost always opaque to the public, performed internally by companies, and lacking independent validation, making the results of internal tests and vendor claims difficult to verify or challenge.

In sum, the development and deployment of de-biasing techniques are promising and will likely play an important role in the future of predictive hiring technology. But there are limits: Some predictors of “success” may be so entwined with protected attributes that de-biasing will be insufficient. In these cases, other kinds of equity-promoting interventions will be needed.

Selection

In the selection stage, employers make final hiring decisions, which might include background checks and negotiation of offer terms. Here, hiring tools aim to predict whether candidates might violate workplace policies, or to estimate what mix of salary and other benefits to offer. Employers who use these tools often seek to increase their “yield” of new hires from extended offers, on terms favorable to the employer. For applicants, this is a critical moment of negotiation.

Background Checks

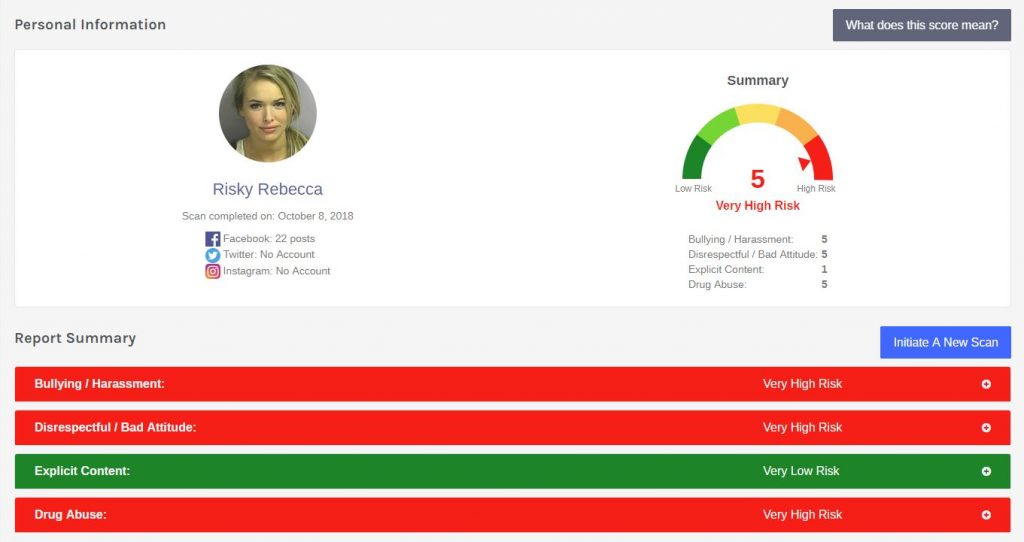

Employers commonly run pre-employment background checks, most often to determine if an applicant has a criminal history or if they are authorized to work. Automated background checks have long concerned civil rights advocates, who highlight the fact that these systems tend to have a disproportionate negative impact on workers of color, immigrants, and women. Today, few employers use predictive technology in a way that changes the nature of background checks, but a few companies are trying to change that.

One background check vendor, Fama, offers employers a service to flag candidates at risk of engaging in sexual harassment, workplace violence, and other “toxic behavior.” Fama says it makes these assessments based on public online content, like social media posts, using automated content analysis tools.